Authors

Bas Jansen & Felienne Hermans

Abstract

In previous work, code smells were adapted to spreadsheet formulas. The smell detection algorithm used in that earlier study was validated on a small dataset of industrial spreadsheets. The validation consisted of interviewing the users of these spreadsheets and asking for their opinion on the detected smells.

In this paper, the algorithm is validated in more depth. We analyze a set of spreadsheets for which users indicated whether or not they are smelly. This new dataset gives us a unique opportunity to gain more insight into how 'bad' spreadsheets can be distinguished from 'good' ones.

We do this in two ways. For both the smelly and the non-smelly spreadsheets, we

- calculated the metrics that detect the smells, and

- calculated metrics with respect to size, level of coupling, and the use of functions.
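The abstract does not define these size, coupling and function-use metrics. The sketch below shows one way such workbook-level numbers could be computed. It uses hypothetical proxies: size is the number of formula cells, coupling is the number of cross-sheet references per formula, and function use is the number of distinct functions and of function calls per formula. The input format (a `Workbook` dict from cell address to formula text) and the names `spreadsheet_metrics` and `Workbook` are assumptions. Neither is the authors' tooling, and the reference matching is simplified.

```python
import re
from collections import Counter

# Hypothetical input format: formula cell address -> formula text.
Workbook = dict[str, str]

# Excel string literals: "..." where "" is an escaped quote.
_STRING_LITERAL = re.compile(r'"(?:[^"]|"")*"')
# A function call: an identifier directly followed by "(" (simplified).
_FUNCTION_CALL = re.compile(r"\b([A-Z][A-Z0-9._]*)\s*\(", re.IGNORECASE)
# A cross-sheet reference such as Sheet2!B1 or 'Input data'!A1 (simplified);
# the lookbehind keeps error values like #REF! from counting as sheet names.
_SHEET_REF = re.compile(r"(?:'[^']+'|(?<![#\w.])[A-Za-z_][\w.]*)!")


def spreadsheet_metrics(workbook: Workbook) -> dict[str, float]:
    """Illustrative size, coupling and function-use proxies (not the paper's)."""
    formula_cells = len(workbook)
    functions: Counter = Counter()
    cross_sheet_refs = 0
    for formula in workbook.values():
        # Blank out string literals so text like "Sheet2!" is not counted.
        code = _STRING_LITERAL.sub('""', formula)
        functions.update(name.upper() for name in _FUNCTION_CALL.findall(code))
        cross_sheet_refs += len(_SHEET_REF.findall(code))
    per_formula = (lambda n: n / formula_cells) if formula_cells else (lambda n: 0.0)
    return {
        "formula_cells": formula_cells,                      # size proxy
        "distinct_functions": len(functions),                # function-use proxy
        "function_calls_per_formula": per_formula(sum(functions.values())),
        "cross_sheet_refs_per_formula": per_formula(cross_sheet_refs),  # coupling proxy
    }


if __name__ == "__main__":
    wb = {
        "Sheet1!C1": "=SUM(A1:A10)",
        "Sheet1!C2": "=IF(C1>100,Sheet2!B1,0)",
        "Sheet1!C3": "='Input data'!A1*2",
    }
    print(spreadsheet_metrics(wb))
```

Comparing such numbers between the smelly and the non-smelly sets is the kind of comparison the abstract describes. Its conclusion is that these numbers alone say little about complexity.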

The results show that the smell metrics are indeed lower in spreadsheets that are not smelly. With respect to size, we found, to our surprise, that the improved spreadsheets were not smaller but bigger. With regard to coupling and the use of functions, both datasets are similar. This indicates that it is difficult to draw conclusions about the complexity of a spreadsheet from metrics for size, degree of coupling, or use of functions.

Sample

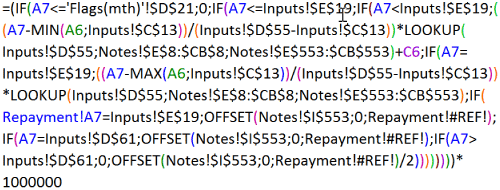

Having many nested conditional operations is considered a threat to code readability, and the same holds for spreadsheet formulas. The Conditional Complexity smell measures the number of conditionals contained in a formula: in this example, 7 nested IF functions. The formula also contains reference errors (#REF!).
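To make the Conditional Complexity measure concrete, here is a minimal sketch of counting conditionals in a formula string. It rests on two assumptions. First, only `IF` calls count as conditionals; functions such as `COUNTIF`, `SUMIF` and `IFERROR` are excluded. Second, string literals are ignored. The function names `conditional_complexity` and `has_reference_error` are hypothetical. This is not the paper's detection algorithm, and any threshold for flagging a formula as smelly would have to be chosen separately.

```python
import re

# Excel string literals: "..." where "" is an escaped quote.
_STRING_LITERAL = re.compile(r'"(?:[^"]|"")*"')
# An IF call: "IF" not preceded by a word character (excludes COUNTIF, SUMIF)
# and followed by "(" (excludes IFERROR). Assumption: only IF counts.
_IF_CALL = re.compile(r"\bIF\s*\(", re.IGNORECASE)


def conditional_complexity(formula: str) -> int:
    """Count IF calls in a formula, ignoring text inside string literals."""
    code = _STRING_LITERAL.sub('""', formula)
    return len(_IF_CALL.findall(code))


def has_reference_error(formula: str) -> bool:
    """True if the formula contains a broken reference (#REF!) outside strings."""
    return "#REF!" in _STRING_LITERAL.sub('""', formula).upper()


if __name__ == "__main__":
    # A hypothetical formula shaped like the sample: 7 nested IFs and a #REF!.
    nested = ("=IF(A1>1,1,IF(A1>2,2,IF(A1>3,3,IF(A1>4,4,"
              "IF(A1>5,5,IF(A1>6,6,IF(#REF!>7,7,0)))))))")
    print(conditional_complexity(nested))  # 7
    print(has_reference_error(nested))     # True
```

Counting calls rather than measuring nesting depth matches the description above ("the number of conditionals contained in a formula"). For the nested sample, the count and the depth coincide.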

Publication

International Conference on Software Maintenance and Evolution (ICSME), September 2015, pages 372-380

Full article

Code smells in spreadsheet formulas revisited on an industrial dataset